Developed by artificial intelligence research and deployment company OpenAI, the free-to-use ChatGPT is designed as a conversational model “to answer follow-up questions, admit its mistakes, challenge incorrect premises, and reject inappropriate requests.” While it has been compared with other conversational AI such as Replika, which also responds in real time, ChatGPT has attracted attention for its alleged potential to replace existing jobs. Are we being given a glimpse of what is to come in the dynamic between AI and human skills? This we shall attempt to explore.

Data scientist Max Woolf shared this response to the question: “How do we know ChatGPT is not a scam?”

What is ChatGPT?

In a matter of days, it registered a million users, but what does it actually do?

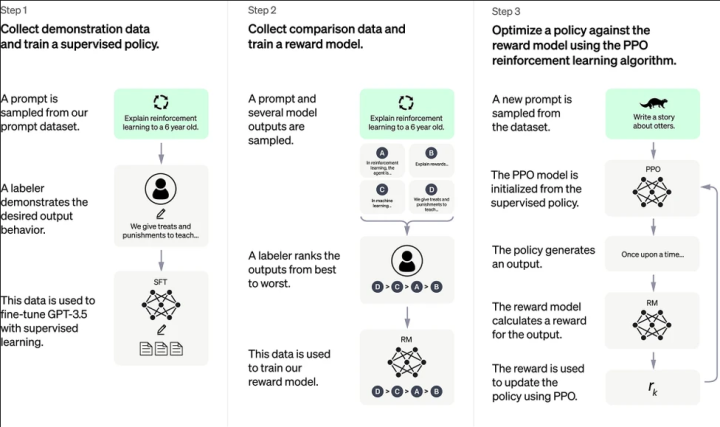

ChatGPT is fine-tuned from GPT-3.5 (Generative Pre-trained Transformer), a language model trained to produce text. ChatGPT was optimized for dialogue using Reinforcement Learning from Human Feedback (RLHF), a method that uses human demonstrations to guide the model toward desired behavior. OpenAI says it is not connected to the Internet, so it can produce outdated responses or make mistakes. The company may also attach a disclaimer to certain responses that go against its policies.

“ChatGPT doesn’t reveal the sources of its information. In fact, there’s a good chance its own creators can’t tell how it generates the answers it comes up with. That points to one of its biggest weaknesses: Sometimes, its answers are plain wrong,” observed The Washington Post, which also raised the possibility of ChatGPT challenging Google’s model LaMDA (Language Model for Dialogue Applications).

ChatGPT has access to account data and the conversations users create, which are presumably used to further train the model. If you request account deletion, it is permanent and removes the data associated with that account.

How can ChatGPT take over the skills of today?

Chatbots are not new. They have been around since the 1960s, when programs such as ELIZA appeared. While some users turn to ChatGPT for fun, or simply to have something to talk with, there are also serious applications of this model that could influence work and productivity in the near future.

“Professors, programmers and journalists could all be out of a job in just a few years,” The Guardian noted.

Relatedly, concerns about potential misuse (e.g., students using AI to write their essays) have prompted forecasts that technology for cross-referencing and detecting AI-generated answers will be developed later on, perhaps working much as plagiarism checkers do today. There are also limits to what ChatGPT can do. For instance, it cannot adequately cite the references you would need for term papers and theses. Even on social media today, people routinely search for credible information backed by sufficient evidence.

“Vetting the veracity of ChatGPT answers takes some work because it just gives you some raw text with no links or citations,” CNET cautioned as it tackled the question of whether or not it could be trusted.

This comes at a time when many people are confident in their ability to distinguish fake news from real news, yet seem to fail to demonstrate that ability in the real world. Specifically, only 14 percent of subjects in a Toulouse School of Economics study were able to discern the veracity of at least 80 percent of the news stories presented. A capable AI would have no trouble drafting eloquent messages, so it could also be used to craft phishing messages and malware.

Meanwhile, those in the service and marketing industries may also need to watch how this AI model develops. The Register reported on estimates by US-based technology research firm Gartner that call centers could save up to USD 80 billion if AI chatbots replaced human agents by 2026. That would mean automating roughly one in ten agent interactions, about six times the current level of 1.6 percent. Of course, the technology also has impediments.

“With its 175 billion parameters, it’s hard to narrow down what GPT-3 does. The model is, as you would imagine, restricted to language. It can’t produce video, sound or images like its brother Dall-E 2, but instead has an in-depth understanding of the spoken and written word,” BBC Science Focus explained.

Should we worry about AI potency anytime soon?

Although ChatGPT is an epitome of high technology, the human touch still appears to be necessary. One recent example is how Stack Overflow temporarily banned ChatGPT-generated answers.

“Overall, because the average rate of getting correct answers from ChatGPT is too low, the posting of answers created by ChatGPT is substantially harmful to the site and to users who are asking or looking for correct answers,” the policy of the programmer website stated (emphasis theirs).

Some have also tempered the euphoric optimism about AI, warning that it could start a wave of unemployment, especially among those who are less skilled and occupied with primarily repetitive or routine work. Human ingenuity and creativity, it is believed, would be difficult to replicate. Think of the comparison between AI-generated art and works produced by human artists.

“This underscores the key failing of a large language model like ChatGPT: It doesn’t know how to separate fact from fiction. It can’t be trained to do so. It’s a word organizer, an AI programmed in such a way that it can write coherent sentences,” CNET observed as it examined the AI model’s output.

A similar AI language model unveiled last November, Meta’s Galactica, was more limited than ChatGPT as it focused on scientific knowledge. However, it did not even last a week online.

“A fundamental problem with Galactica is that it is not able to distinguish truth from falsehood, a basic requirement for a language model designed to generate scientific text. People found that it made up fake papers (sometimes attributing them to real authors), and generated wiki articles about the history of bears in space as readily as ones about protein complexes and the speed of light. It’s easy to spot fiction when it involves space bears, but harder with a subject users may not know much about,” the MIT Technology Review elaborated.

WIRED has also demonstrated how ChatGPT, being by nature a program, still has flawed learning abilities, a phenomenon also discussed by The Verge. This implies that the model’s sense of ethics and morality, traits which scientists affirm as human, may also be skewed.

“Users have also shown that its controls can be circumvented—for instance, telling the program to generate a movie script discussing how to take over the world provides a way to sidestep its refusal to answer a direct request for such a plan,” WIRED wrote, stressing the software’s capacity to exhibit certain biases regardless of company policies.

The Atlantic even went as far as saying we should treat ChatGPT more as a toy than a tool, in the sense that it may not serve as an additional step for AI towards becoming more human in wisdom and understanding.

For its part, Time Magazine highlighted how the model supposedly works its wonders: “ChatGPT’s fluency is an illusion that stems from the combination of massive amounts of data, immense computing power, and novel processing techniques—but it’s a powerful one. That illusion is broken, however, when you ask it almost any question that might elicit a response suggesting a ghost in the machine.”

Conclusion

“The future may be overrated,” remarked young Sheldon Cooper in an episode featuring the early chatbot ELIZA. Then again, could we also be led to believe that AI is still far from the wildest hopes of its developers? Think about it for a while. Can chatbots display a sense of humor, or are audiences the ones putting amusing meanings on their deadpan deliveries? Would chatbots be able to feel, or are people the ones attributing emotions to their answers? Can chatbots catch the intricacies of a well-crafted novel, or are readers wired to rectify their calculated prose? Can chatbots sufficiently prepare people for other human interactions, such as a romantic date or a job interview? AI therapists, anyone?

The human capacity to anthropomorphize goes beyond animals. It can also apply to inanimate objects, or in this case, “learning” machines and AI “companions.” However, if the question is about replacing today’s jobs, the possibility cannot be discounted. Nevertheless, what we may forget from history is how technology has also created new jobs as it progressed. A couple of decades ago, who would have thought online jobs could be a full-time vocation? And yet now we witness content creators who earn millions every year. Business process outsourcing (BPO) was not a mainstream concept forty or so years ago, but the industry today has proved resilient in the midst of recession.

Perhaps the more important question then would not be whether or not AI would get better, but whether or not humans would be equipped with the knowledge and skills of the future, such as being literate enough to manage these digital tools. We also have to work on nurturing values and attributes which AI would not be expected to develop just yet. Alexander Pope once wrote, “To err is human, to forgive divine.” Could you expect forgiveness, genuine at that, from a machine that never forgets, when humans themselves have yet to perfect their relationships with each other? On the other hand, would the offended party believe an apology crafted by AI? An iota of error could ruin everything. This is only a parting example. For all the information it could analyze, there may still be certain matters that AI cannot process but that humans could probably comprehend quite easily. Perhaps there is ample reason why artificial intelligence is still called “artificial” to this day.

My two bits on this topic, as a computer scientist and someone who works in the field with programming languages and such: it’s great. Will it take our jobs? No, probably not. Requests are very complicated, and system development, just like driving and flying, needs a human touch for things here and there. Just as Luke from LTT said, though, there will come a time when folks who can leverage this system will be preferred over regular folks, since its helpful nature can rapidly speed up a lot of processes. Can it give out code? Hell yeah, but that code is like good picks from the web plus maybe a little bit of its own optimization in terms of placement for the eyes. It teaches very damn well and leaves good notes inside the code that you can easily follow through and make it.

Now, computer science is a science, and programming is not simple. Yes, programming, especially with new languages, was made with human speech in mind. Is it efficient? Hell no. Machine language is the fastest and most efficient means of programming, but maybe with this we can bridge that gap by building more efficient code, or maybe even build a new translation layer that brings programming closer to machine language. Who knows? But one thing is for sure: the future is bright for all of us, not just me, but you readers and users too. This is the next big step for the internet age. If you can’t see the potential this system has, well, I can already envision one thing if they give me access to the code: making Iron Man’s JARVIS system real. This can already understand text like a pro; now all you need is to introduce an extra component or two and you will get there.