Apple has announced new features to protect children from online threats, coming to its platforms with software updates later this year. These include new safety features in Messages, detection of known Child Sexual Abuse Material (CSAM) in iCloud Photos, and updates to Siri and Search.

Messages

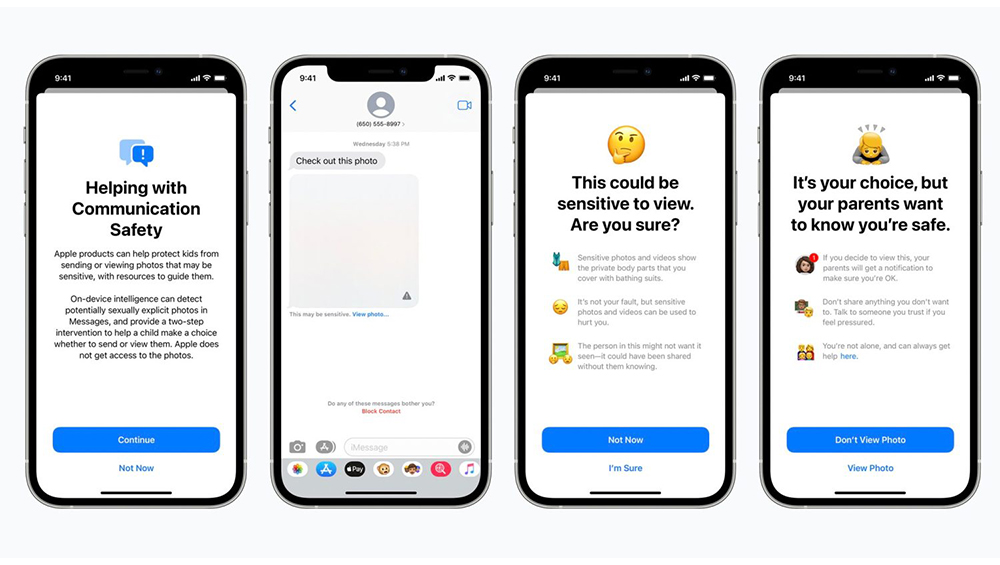

Messages on the iPhone, iPad, and Mac will get new Communication Safety features that warn children and their parents when sexually explicit photos are received or sent. Apple said that when a child receives an explicit image, the image will be blurred and the Messages app will display a warning saying the image “may be sensitive”.

The pop-up will also explain that if the child decides to view the image, their iCloud Family parent will receive a notification “to make sure you’re OK”, and it will include a link to additional help. A similar warning will appear when a child is about to send a sexually explicit photo, and a parent will be notified if the child chooses to send it.

Apple says it will use on-device machine learning to analyze images and determine whether a photo is sexually explicit. According to Apple, iMessage remains end-to-end encrypted, and the company does not see or have access to any of your messages. The new feature will be opt-in.

Scanning Photos for Child Sexual Abuse Material (CSAM)

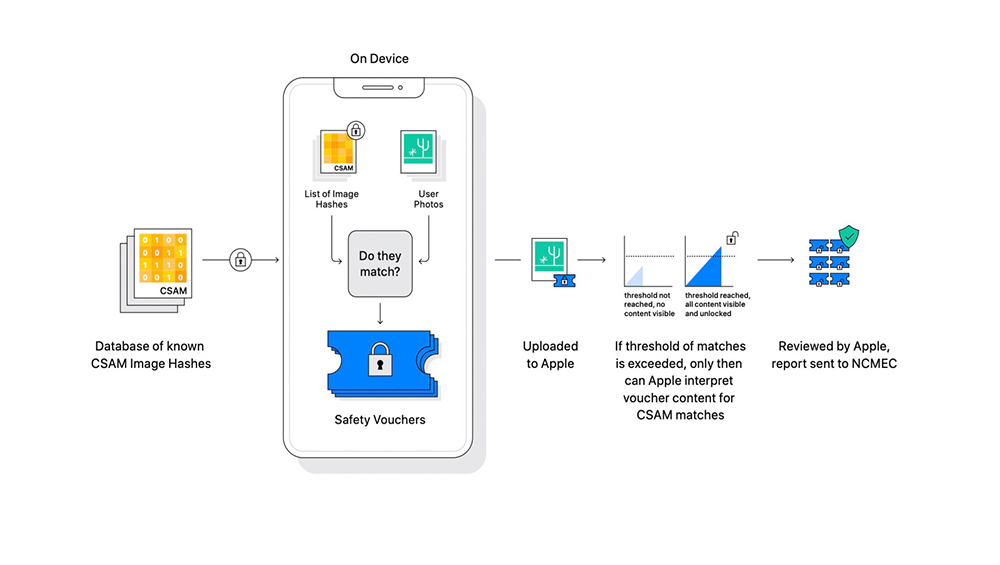

Starting with iOS 15 and iPadOS 15, Apple will be able to detect known CSAM in iCloud Photos, allowing it to report these instances to the National Center for Missing and Exploited Children, a non-profit organization that works in collaboration with U.S. law enforcement agencies.

Apple emphasized that its method of detecting CSAM is designed with user privacy in mind. Images are analyzed on your device to check for a match against a database of known CSAM images, and all matching is done on device.

Siri and Search

Apple also announced that it will be expanding guidance in Siri and Spotlight Search across devices by providing additional resources to help children and parents stay safe online and get help with unsafe situations. Users will be able to ask Siri how they can report CSAM or child exploitation and will be pointed to resources for where and how to file a report. Siri and Search are also being updated to intervene when users perform searches related to CSAM.

All of these updates are coming later this year and will be available first in the US.

Source: Apple
