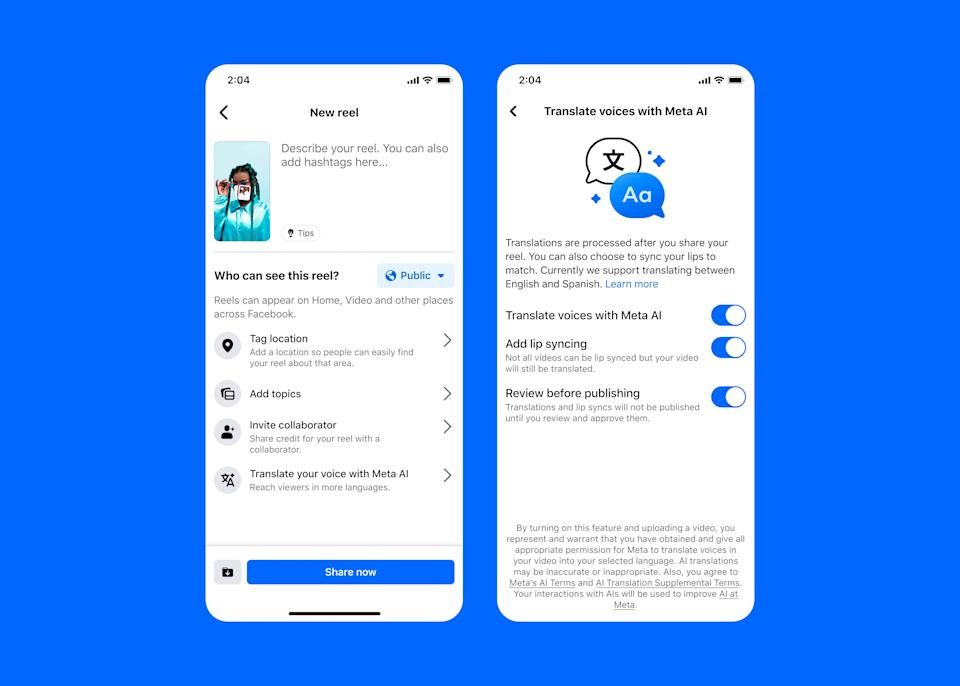

Meta has rolled out a new AI voice dubbing feature globally, allowing users to translate their voice in Reels with an optional lip-syncing tool.

The AI generates a translated voice track based on the user’s tone, and if lip-syncing is enabled, it adjusts the mouth movements to match the translated language. Users can review the translated version before posting, and viewers will see a tag noting that the clip was ‘AI-translated’.

The feature currently supports up to two speakers in a clip. Creators can also check a tracker that shows how their dubbed videos perform in different languages.

Meta recommends using the tool mainly for face-to-camera videos. The tool may not work properly if the speaker's mouth is obstructed, or if there is heavy background music or overlapping speech.

At launch, translations work only between English and Spanish, though Meta says more languages will be added in the future. For now, the tool is available to Facebook creators with at least 1,000 followers, while anyone with a public Instagram account can try it out.

The feature was first previewed by Mark Zuckerberg during Meta Connect 2024. The move comes as other platforms also explore AI translations. YouTube previously introduced a similar feature in 2024, while Apple built live translation tools into iOS 26 for Messages, Phone, and FaceTime.
